Cancer biology gets the supercomputing treatment on Oak Ridge’s Summit and Lawrence Livermore’s Sierra.

Super-connected HPC

The superfacility concept links high-performance computing capabilities across multiple scientific locations for scientists in a range of disciplines.

Turbocharging data

Pairing large-scale experiments with high-performance computing can reduce data processing time from several hours to minutes.

Borrowing from the brain

Nature inspires Oak Ridge National Laboratory algorithms for neuromorphic processors.

A quantum bridge

Sandia National Laboratories researchers seek to connect quantum and classical calculations in a drive for a new supercomputer paradigm.

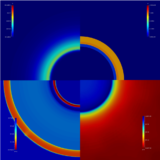

Banishing blackouts

An Argonne researcher upgrades supercomputer optimization algorithms to boost reliability and resilience in U.S. power systems.

Scaling up metadata

A Los Alamos National Laboratory and Carnegie Mellon University exascale file system helps scientists sort through enormous amounts of records and data to accelerate discoveries.

Beyond the tunnel

A Stanford-led team turns to Argonne’s Mira to fine-tune a computational route around aircraft wind-tunnel testing.

Efficiency surge

A DOE CSGF recipient at the University of Texas took on a hurricane-flooding simulation and blew away limits on its performance.

Robot whisperer

A DOE computational science fellow combines biology, technology and more to explore behavior, swarms and space.

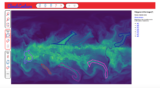

Labeling climate

A Berkeley Lab team tags dramatic weather events in atmospheric models, then applies supercomputing and deep learning to refine forecasts.

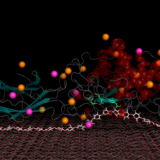

Molecular landscaping

A Brookhaven-Rutgers group uses supercomputing to target the most promising drug candidates from a daunting number of possibilities.

Forged in a Firestorm

A Livermore team takes a stab, atom by atom, at an 80-year-old controversy over a metal-shaping property called crystal plasticity.

Visions of exascale

Argonne National Laboratory’s Aurora will take scientific computing to the next level. Visualization and analysis capabilities must keep up.

ARM wrestling

Aiming to expand their technology options, Vanguard program researchers are testing a prototype supercomputer built with ARM processors.

Revving up chemistry

Exascale computing, combined with redesigned computational chemistry software, could help researchers develop new renewable energy materials and greener chemical processes.

Multiphysics models for the masses

A Los Alamos tool gives users flexibility and makes stockpile-stewardship physics simulations easier to use.

Genome-crunching

Supercomputing power and increasing genomic data are allowing Oak Ridge researchers to examine drought tolerance in plants and other big biological questions.

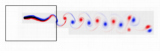

Swimming lessons

A Berkeley Lab-Northwestern University team follows fish movements to build energy-efficiency algorithms.

Scaling the unknown

A supercomputing co-design collaboration involving academia, industry and national labs tackles exascale computing’s monumental challenges.

Meeting the eye

A Brookhaven National Laboratory computer scientist is building software to help researchers interact with their data in new ways.

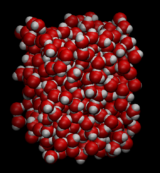

Water works

Complex simulations are unraveling the idiosyncrasies of this unique liquid’s behavior.

Connecting equations

A Sandia National Laboratories mathematician is honored for his work creating methods for supercomputers.

Enhancing enzymes

High-quality computational models of enzyme catalysts could lead to improved access to biofuels and better carbon capture.

Cloud forebear

PanDA, a data-management system built to analyze the universe’s building blocks, anticipated cloud computing.