Cosmologists have long struggled with facts they can’t explain.

In the 1920s, they discovered that the universe is expanding, as it has been since the Big Bang, the enormous explosion that gave birth to the cosmos about 13.8 billion years ago.

Since the 1930s, they’ve also known that visible matter doesn’t have enough gravitational pull to hold the galaxies together. So the universe must also contain some invisible stuff, dubbed dark matter.

In the late 1990s, they discovered that the galaxies are not simply coasting on momentum from the Big Bang and slowing under gravity’s pull, as expected. Instead, the expansion is speeding up, and the galaxies are flying apart ever faster. The cause of this acceleration, now called dark energy, is widely considered the greatest mystery in cosmology.

Some cosmologists would like to revive Albert Einstein’s famous cosmological constant. Einstein had introduced the constant into the equations of general relativity to match the prevailing view of his time: a static universe. He abandoned the constant after astronomers discovered that the universe is expanding.

The universe now seems to need the cosmological constant, or something like it, to explain its accelerating expansion. Besides the cosmological constant, scientists have imagined a variety of forces that might have been suppressed early on and unleashed later to speed the galaxies apart.
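In textbook form, the constant appears as the term Λ in Einstein’s field equations. When those equations are applied to a uniform, expanding universe, they yield an acceleration equation for the cosmic scale factor a(t), which tracks how distances between galaxies grow. The symbols below are the standard ones: G is Newton’s constant, c the speed of light, and ρ and p the density and pressure of matter and radiation. This is the generic form, not an equation taken from the projects described here.

$$
G_{\mu\nu} + \Lambda g_{\mu\nu} = \frac{8\pi G}{c^4} T_{\mu\nu}
$$

$$
\frac{\ddot a}{a} = -\frac{4\pi G}{3}\left(\rho + \frac{3p}{c^2}\right) + \frac{\Lambda c^2}{3}
$$

Ordinary and dark matter make the first term negative, which slows the expansion. A positive Λ adds a term that does not dilute as space grows. Once matter thins out, that term can make ä positive, which is what accelerating expansion means.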

“The origin of this dark energy is unknown, and in fact it could be that the accelerated expansion is actually due to a modification of Einstein’s theory of gravity on very large scales instead,” says Katrin Heitmann, a physicist and computational scientist at Argonne National Laboratory (ANL).

Heitmann is principal investigator of the ANL part of Computation-Driven Discovery for the Dark Universe, supported by DOE’s SciDAC program (for Scientific Discovery through Advanced Computing). Under the direction of Argonne’s Salman Habib, the multi-laboratory project is developing the computational and statistical tools supercomputers can use to help solve the dark-energy mystery. SciDAC is part of the Advanced Scientific Computing Research program in DOE’s Office of Science.

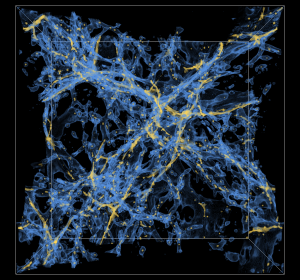

The project dovetails with the work of cosmologists who study dark energy. The earliest data come from the oldest light in the universe, the Cosmic Microwave Background (CMB). This radiation, which reaches Earth through the spaces between stars and galaxies, is left over from the universe’s infancy, some 380,000 years after the Big Bang. Tiny temperature differences throughout the CMB reveal random disturbances – sound waves – that reverberated through the early cosmos. In short, the CMB depicts the initial conditions for the structures of matter today – stars, galaxies, galaxy clusters and the much more massive dark matter halos and filaments that make up the cosmic web.

Scientists have been using specialized telescopes to amass data about the CMB and the universe’s visible matter in search of clues to dark matter and dark energy. They’re planning still more data-gathering projects.

Two projects will create the most detailed maps to date showing the positions of galaxies and gas clouds. The first, already under way, is the Baryon Oscillation Spectroscopic Survey (BOSS). It’s the latest stage of the Sloan Digital Sky Survey (SDSS), a collaboration of 27 universities and other research institutions. Baryons, chiefly protons and neutrons, make up ordinary, visible matter; oscillation refers to the ancient sound waves the CMB revealed; and spectroscopy is the detailed study of light. The project is creating a large-scale map of how galaxies are distributed.

The next major survey, the Mid-Scale Dark Energy Spectroscopic Instrument (MS-DESI), is set to begin in 2018. Run by DOE’s Lawrence Berkeley National Laboratory, MS-DESI will create the largest three-dimensional map of the universe.

To expose the invisible hand of dark energy in the BOSS and MS-DESI maps, computational scientists will simulate the birth and growth of the universe tens of thousands of times, portraying the influence of different possible dark-energy concepts. The calculations will suggest where cosmologists should look for clues in astronomical surveys.
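The real simulations evolve matter in three dimensions. A much simpler calculation still hints at why different dark-energy ideas leave different fingerprints in survey maps. The sketch below assumes a flat universe containing matter plus dark energy with a constant equation-of-state parameter w, where w = -1 corresponds to the cosmological constant. The values H0 = 70 km/s/Mpc and Ωm = 0.3 are illustrative assumptions, not the project’s settings, and the function names are hypothetical. The sketch computes how far away a galaxy at a given redshift lies for several values of w.

```python
import math

C_KM_S = 299_792.458  # speed of light in km/s


def hubble_ratio(z, omega_m=0.3, w=-1.0):
    """E(z) = H(z)/H0 for a flat universe of matter plus dark energy with constant w.

    Radiation is neglected, a reasonable approximation at late cosmic times.
    """
    omega_de = 1.0 - omega_m  # flatness: the density fractions sum to 1
    return math.sqrt(omega_m * (1 + z) ** 3 + omega_de * (1 + z) ** (3 * (1 + w)))


def comoving_distance_mpc(z, h0=70.0, omega_m=0.3, w=-1.0, steps=1000):
    """Comoving distance D_C = (c/H0) * integral from 0 to z of dz'/E(z'), trapezoidal rule."""
    dz = z / steps
    total = 0.0
    for i in range(steps + 1):
        weight = 0.5 if i in (0, steps) else 1.0  # endpoints get half weight
        total += weight / hubble_ratio(i * dz, omega_m, w)
    return (C_KM_S / h0) * total * dz


if __name__ == "__main__":
    for w in (-0.8, -1.0, -1.2):
        d = comoving_distance_mpc(0.5, w=w)
        print(f"w = {w:+.1f}: comoving distance to z = 0.5 is {d:,.0f} Mpc")
```

A more negative w makes the same redshift correspond to a larger distance. Surveys such as BOSS try to measure differences of this kind, although full simulations are needed to capture how structure grows in each scenario.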

Heitmann runs one simulation project, “MockBOSS: Calibrating BOSS Dark Energy Science with HACC.” A second project, “Simulating Cosmological Lyman-α Forest,” is headed by Zarija Lukić, a physics project scientist at Berkeley Lab.

MockBOSS will deploy a new N-body code called HACC (Hybrid/Hardware Accelerated Cosmology Code). N-body codes simulate the complicated movements of objects as they interact through their mutual gravitational pulls.
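Production codes such as HACC use much faster approximate force solvers to handle a trillion particles. The sketch below shows only the textbook idea behind any N-body code: direct summation, in which every body feels the pull of every other body, advanced in time with a leapfrog integrator. The code units (G = 1), the softening length and the function names are illustrative assumptions, not HACC’s.

```python
import math

G = 1.0           # gravitational constant in simplified code units (assumption)
SOFTENING = 1e-3  # small length that keeps very close encounters from producing huge forces


def accelerations(positions, masses):
    """Direct O(N^2) sum: every body feels the pull of every other body."""
    n = len(positions)
    acc = [[0.0, 0.0, 0.0] for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            dx = [positions[j][k] - positions[i][k] for k in range(3)]
            r2 = sum(d * d for d in dx) + SOFTENING ** 2
            inv_r3 = 1.0 / (r2 * math.sqrt(r2))
            for k in range(3):
                # Newton's third law: equal and opposite pulls on bodies i and j.
                acc[i][k] += G * masses[j] * dx[k] * inv_r3
                acc[j][k] -= G * masses[i] * dx[k] * inv_r3
    return acc


def leapfrog_step(positions, velocities, masses, dt):
    """Advance all bodies by one kick-drift-kick leapfrog step, updating the lists in place."""
    acc = accelerations(positions, masses)
    for i in range(len(positions)):
        for k in range(3):
            velocities[i][k] += 0.5 * dt * acc[i][k]  # half kick
            positions[i][k] += dt * velocities[i][k]  # drift
    acc = accelerations(positions, masses)
    for i in range(len(positions)):
        for k in range(3):
            velocities[i][k] += 0.5 * dt * acc[i][k]  # second half kick


if __name__ == "__main__":
    # Two equal masses on a circular orbit around their common center.
    v = math.sqrt(0.5)
    pos = [[-0.5, 0.0, 0.0], [0.5, 0.0, 0.0]]
    vel = [[0.0, -v, 0.0], [0.0, v, 0.0]]
    for _ in range(1000):
        leapfrog_step(pos, vel, [1.0, 1.0], dt=1e-3)
    print("separation after 1000 steps:", math.dist(pos[0], pos[1]))
```

Each step costs time proportional to N², which is hopeless for a trillion particles. That cost is why large cosmology codes approximate the long-range part of gravity rather than summing every pair.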

“HACC will model the evolution of matter over time into the cosmic web,” Heitmann explains. “In post-processing, galaxies are added using a halo-occupation distribution model. This model is calibrated against observations.”
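A halo-occupation distribution (HOD) model assigns galaxies to the dark matter halos that a simulation produces, based on each halo’s mass. The sketch below uses a commonly used five-parameter form from the literature. A halo hosts a central galaxy with a probability that rises smoothly with mass, and halos with a central also host a random number of satellites that grows as a power law in mass. The parameter values and function names are illustrative assumptions. MockBOSS’s calibrated model may use a different form.

```python
import math
import random

# Illustrative parameter values only (assumed, not MockBOSS's calibrated ones).
# Halo masses are in solar masses; "log" means log base 10.
HOD_PARAMS = {
    "log_m_min": 13.0,   # mass at which half of halos host a central galaxy
    "sigma_log_m": 0.3,  # softness of the central-galaxy mass cutoff
    "log_m0": 13.2,      # satellites appear only above this mass
    "log_m1": 14.0,      # mass scale at which a halo hosts about one satellite
    "alpha": 1.0,        # power-law slope of the satellite count
}


def mean_centrals(mass, p=HOD_PARAMS):
    """Mean number (0 to 1) of central galaxies in a halo of this mass."""
    return 0.5 * (1.0 + math.erf((math.log10(mass) - p["log_m_min"]) / p["sigma_log_m"]))


def satellite_power_law(mass, p=HOD_PARAMS):
    """Mean satellite count for a halo that already hosts a central galaxy."""
    m0, m1 = 10 ** p["log_m0"], 10 ** p["log_m1"]
    if mass <= m0:
        return 0.0
    return ((mass - m0) / m1) ** p["alpha"]


def mean_satellites(mass, p=HOD_PARAMS):
    """Mean satellite count averaged over all halos of this mass."""
    return mean_centrals(mass, p) * satellite_power_law(mass, p)


def poisson(lam, rng):
    """Draw a Poisson-distributed integer with mean lam (Knuth's method; fine for small lam)."""
    threshold, k, prod = math.exp(-lam), 0, rng.random()
    while prod > threshold:
        k += 1
        prod *= rng.random()
    return k


def populate(halo_masses, seed=0):
    """Return a (central, satellites) count pair for each halo, drawn at random."""
    rng = random.Random(seed)
    counts = []
    for m in halo_masses:
        has_central = rng.random() < mean_centrals(m)
        # Satellites are drawn only for halos with a central, so the overall
        # mean satellite count equals mean_satellites(m).
        n_sat = poisson(satellite_power_law(m), rng) if has_central else 0
        counts.append((int(has_central), n_sat))
    return counts
```

Calibrating against observations means adjusting these few parameters until the simulated galaxy counts and clustering match what a survey actually sees.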

For two years in a row, the code has been a finalist for the Association for Computing Machinery’s prestigious Gordon Bell Prize, which recognizes outstanding achievement in high-performance computing.

The largest HACC simulation ran on Mira, a Blue Gene/Q supercomputer at the Argonne Leadership Computing Facility. The cube-shaped volume of space in the simulation measured 15 billion light years on a side. If that block of space were shrunk to fit into Arizona’s kilometer-wide Meteor Crater, the disk of our Milky Way galaxy, 100,000 light years from edge to edge, would be only about 7 millimeters wide, roughly the width of a pencil.

The simulation can reveal details with a resolution of about 23,000 light years. That’s roughly the distance from Earth to the center of the Milky Way, or about 1.5 millimeters on the Meteor Crater scale. Working with a trillion particles, each representing a mass equivalent to a billion suns, the HACC simulation was more than three times larger than any prior model.

With HACC, “you can investigate the effects of different cosmological scenarios,” Heitmann says. “You can change properties of the dark matter and dark energy, for example, and investigate how best the resulting differences can be observed by the surveys.”

BOSS and MS-DESI – and therefore HACC simulations – target luminous red galaxies, bodies bright enough to be seen from about 6 billion light years away and bearing evidence of their distance and velocity in their light.

Lukić’s project will study light that comes from even farther away – from quasars, among the brightest objects in the universe. A quasar is thought to be the core of a so-called active galaxy, where a supermassive black hole drags stars, dust and any other material within reach to an implosive death. Quasars shine from as far as 11.8 billion light years away.

The electromagnetic energy a quasar emits spans the spectrum, from radio waves to gamma rays. As this light travels through space, its waves are continuously stretched to longer wavelengths, or redshifted, by the universe’s expansion. Along the way, the light also passes through clouds of hydrogen gas. Each cloud robs the emission of a specific wavelength of ultraviolet light. When astronomers examine the spectrum, the missing wavelength shows up as a dark strip called a Lyman-alpha line. If the light passed through several hydrogen clouds and was redshifted in between, each cloud absorbs at a different observed wavelength. The resulting Lyman-alpha lines are spaced out like a stand of trees, a so-called Lyman-alpha forest. Each line records the distance of a different cloud.
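The link between a line’s position and a cloud’s distance is simple arithmetic. A hydrogen cloud absorbs Lyman-alpha light at 1215.67 angstroms (about 121.6 nanometers) in its own frame. By the time the light reaches us, expansion has stretched that wavelength by a factor of (1 + z), where z is the cloud’s redshift. The sketch below inverts that relation. The function names and the sample wavelengths are hypothetical. A real analysis must also handle complications this ignores, such as absorption by heavier elements and higher-order Lyman lines.

```python
LYMAN_ALPHA_REST_ANGSTROM = 1215.67  # rest-frame wavelength of hydrogen's Lyman-alpha transition


def absorber_redshift(observed_angstrom):
    """Redshift of a hydrogen cloud whose Lyman-alpha absorption is seen at this wavelength.

    The cloud absorbs at 1215.67 angstroms in its own frame; cosmic expansion then
    stretches that wavelength by a factor (1 + z) before the light reaches us.
    """
    return observed_angstrom / LYMAN_ALPHA_REST_ANGSTROM - 1.0


def forest_redshifts(observed_lines, quasar_redshift):
    """Keep lines that could be Lyman-alpha absorbers lying between us and the quasar,
    sorted from nearest (lowest redshift) to farthest."""
    zs = (absorber_redshift(lam) for lam in observed_lines)
    return sorted(z for z in zs if 0.0 < z < quasar_redshift)


if __name__ == "__main__":
    # Hypothetical absorption-line wavelengths (angstroms) in a made-up quasar spectrum.
    lines = [4010.0, 3920.5, 4105.2, 4600.0]
    for z in forest_redshifts(lines, quasar_redshift=2.5):
        print(f"cloud at z = {z:.3f}")
```

Clouds cannot lie beyond the quasar itself, so the filter drops any line implying a redshift above the quasar’s. Turning each redshift into a distance in light years then requires a cosmological model for the expansion history.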

“Each quasar we observe gives us about 1 billion light years of skewer – a one-dimensional map (of the clouds) in that direction on the sky,” Lukić says.

BOSS will take spectra from about 160,000 quasars. MS-DESI will hunt up about a million. “Having so many one-dimensional skewers will enable us to reconstruct a three-dimensional distribution of neutral hydrogen in the universe, about 10 billion to 12 billion light years away from us,” he says.

For his simulations, Lukić will use a code named Nyx on Hopper, a Cray XE6, and Edison, a Cray XC30, both at the National Energy Research Scientific Computing Center. The code’s lead developer is Ann Almgren in Berkeley Lab’s Center for Computational Sciences and Engineering. Like HACC, Nyx is an N-body code, but it also incorporates hydrodynamics. It treats dark matter as pressureless, collisionless particles and visible, baryonic matter as an ideal gas. Nyx can follow how gas is distributed in the simulation, while HACC focuses on the dark-matter distribution. Nyx’s richer physics matters on smaller scales, but because it demands more computing power, Nyx simulates smaller volumes.
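In a hydrodynamic treatment like this, gas pressure is usually supplied by the standard ideal-gas equation of state. Dark matter has no such pressure term. The form below is the generic textbook relation, with ρ the gas density and e its internal energy per unit mass. The adiabatic index γ = 5/3 is an assumption, the value conventionally used for monatomic cosmological gas, and is not taken from the Nyx documentation.

$$
p = (\gamma - 1)\,\rho\, e, \qquad \gamma = \tfrac{5}{3}
$$

Pressure lets the gas resist gravitational collapse on small scales. This is one reason gas traces the cosmic web more smoothly than dark matter does and why hydrodynamics matters for modeling the Lyman-alpha forest.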

Lukić says the various studies may finally converge on dark energy’s identity. “Having a good map of what primordial fluctuations are via the CMB, what the cosmic web is now via galaxies and clusters of galaxies, and also intermediate times via the Lyman-alpha forest,” he says, “will enable us to understand better what dark energy can be, and what models can reliably be ruled out.”