Machine learning methods show remarkable powers of prediction. But statistical guesses aren’t sufficient for most real-world scientific problems that require rigorous mathematics and incorporate physical principles.

Jerry Liu works at the intersection of pure mathematics and these cutting-edge artificial intelligence tools. He focuses on improving these methods in areas such as differential equations, physics and identifying trends in regularly occurring events.

That work spans a variety of questions, such as whether large models can learn to solve challenging problems in fluid dynamics or achieve the high-precision computation needed for engineering. He’s also investigating why standard machine learning training methods and optimization strategies can be inaccurate in scientific applications.

Liu grew up in Menlo Park, California, just a short drive from Stanford University, where he’s now a fourth-year computational math Ph.D. student. Early on, he was drawn in by the power of abstraction: the idea that the messy, complicated world can sometimes be explained through elegant structures and surprising connections.

“What attracted me most to math were these sparks where two ideas from completely different universes suddenly meet,” he says. “It’s magical.”

Those sparks guided Liu to Duke University, where he majored in mathematics and computer science and discovered a passion for topology. But Duke also exposed him to a culture of interdisciplinary exploration, and his focus began to drift toward the emerging world of machine learning. A turning point came when he encountered StyleGAN2 — NVIDIA’s breakthrough generative model, released in 2020, capable of producing stunningly realistic human faces.

“I spent a couple months just playing with it,” he recalls. “It was the first time I saw machine learning leap beyond what anyone expected.”

His newfound interest brought him to Cynthia Rudin’s lab, where he explored inverse problems and generative models, including early work with generative adversarial networks, or GANs, for unblurring faces. At the same time, Rudin’s work was grounded in classical statistics. The collision of those two worlds, the theoretical and the empirical, captured Liu’s imagination.

“These generative models worked unbelievably well,” he says. “And yet we don’t have the mathematical tools to explain why. I found that both crazy and very exciting.”

His search for deeper computational foundations led him, during his senior year, to a summer internship at Lawrence Livermore National Laboratory, where he worked on geometric optimization and numerical schemes for fluid simulations.

By the time Liu arrived at Stanford in 2022, he knew exactly what he wanted to study: the interface between scientific computation and machine learning. He received a Department of Energy Computational Science Graduate Fellowship (DOE CSGF) and joined Chris Ré’s lab, one of the rare research groups working across the full stack, from hardware-efficient systems design to theoretical advances in algorithms and architectures.

“He’s one of the few people who does everything,” Liu says. “And the lab has students working at every level of that pipeline.”

Many of the ideas Liu is exploring at Stanford connect to his DOE CSGF practicum at Lawrence Berkeley National Lab in 2023. With mentors Michael Mahoney and N. Benjamin Erichson, he applied large-scale machine learning methods to scientific datasets, exploring generalization across domains.

“That practicum shaped everything I’ve done since,” he says. “It was the first time I could seriously explore how language-model ideas might apply to real scientific systems.”

One of Liu’s recent projects explores whether machine learning models can store knowledge efficiently, without today’s energy-intensive, brute-force training. “It seems very dumb,” he says, “that to teach a model a simple fact — like the capital of France — you have it take millions of tiny updates until it finally figures it out.” He and his collaborators have developed a module that can encode factual associations into a neural network and slot it into a larger model, bypassing the learning loop. Their early results show that models can absorb these pre-built knowledge structures with almost no performance loss.

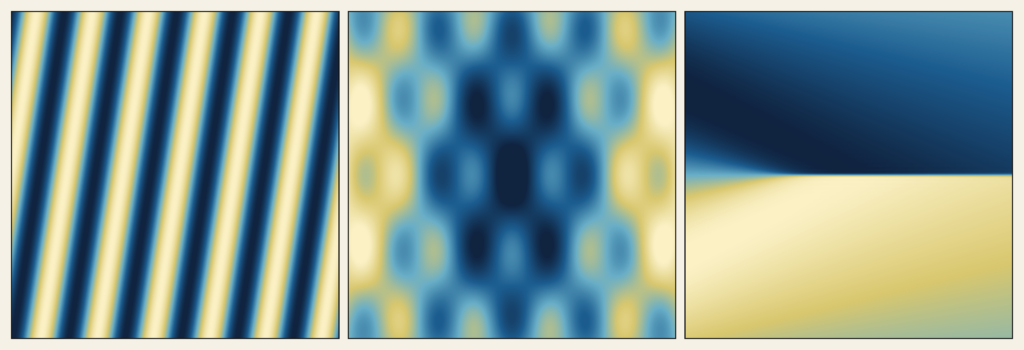

At the same time, Liu is tackling the challenge of using machine learning for numerical accuracy, an essential requirement for scientific simulation. In one project, his group studied why standard training fails even on simple least-squares problems, which fit a line through a set of data to derive a best-fit model. A familiar example is estimating a home’s value by fitting a line through house sale prices as a function of square footage. The conclusion: repurposed tricks from language models aren’t sufficient for optimizing scientific machine learning. The field needs new architectures and procedures. These insights have motivated his contributions to BWLer, a method that won a Best Paper Award at the 2025 Conference on Learning Theory’s Workshop for the Theory of AI for Scientific Computing. That work helps illuminate the tradeoff between the precision of physics-informed neural networks and their sensitivity to erroneous input data.
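
For readers who want to see the house-price illustration concretely, here is a minimal sketch of a least-squares line fit in Python with NumPy. It is not Liu’s code or experiment: the data values, the `fit_line` helper and the 1,800-square-foot query are all made up for illustration, and the fit uses a direct linear-algebra solve rather than the iterative training his group studied.

```python
import numpy as np

# Hypothetical, noise-free data (not from the article):
# square footage and sale price in dollars.
sqft = np.array([1000.0, 1500.0, 2000.0, 2500.0, 3000.0])
price = np.array([300_000.0, 400_000.0, 500_000.0, 600_000.0, 700_000.0])


def fit_line(x, y):
    """Return (slope, intercept) minimizing sum((slope * x + intercept - y) ** 2)."""
    # Design matrix: one column for the slope term, one column of ones for the intercept.
    A = np.column_stack([x, np.ones_like(x)])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef[0], coef[1]


slope, intercept = fit_line(sqft, price)

# Use the fitted line to estimate the price of a hypothetical 1,800-square-foot home.
estimate = slope * 1800.0 + intercept
print(f"slope={slope:.2f} $/sqft, intercept={intercept:.2f} $, estimate={estimate:.2f} $")
```

Because a direct solver handles this tiny problem to near machine precision, it makes a useful baseline when asking how closely a gradient-trained model can match the same answer.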

As Liu looks ahead, he plans to spend the next year and a half pushing toward a working large-scale model that eschews pattern recognition for scientific reasoning. “I’d love to train a model on fluids data and really see how far we can take it,” he says.

The DOE CSGF has facilitated much of this work, not only by funding his research but by giving him access to world-class computational resources. Liu runs nearly all his research on Perlmutter, Berkeley Lab’s powerful supercomputer.

Outside the lab, Liu grounds himself with music. He has played piano for two decades and often retreats to Stanford’s practice rooms for a break from research. “It’s my way of relaxing,” he says.